|

|

1 år sedan | |

|---|---|---|

| .. | ||

| README.md | 1 år sedan | |

| faster-rcnn_r50_fpn_32xb2-1x_openimages-challenge.py | 1 år sedan | |

| faster-rcnn_r50_fpn_32xb2-1x_openimages.py | 1 år sedan | |

| faster-rcnn_r50_fpn_32xb2-cas-1x_openimages-challenge.py | 1 år sedan | |

| faster-rcnn_r50_fpn_32xb2-cas-1x_openimages.py | 1 år sedan | |

| metafile.yml | 1 år sedan | |

| retinanet_r50_fpn_32xb2-1x_openimages.py | 1 år sedan | |

| ssd300_32xb8-36e_openimages.py | 1 år sedan | |

README.md

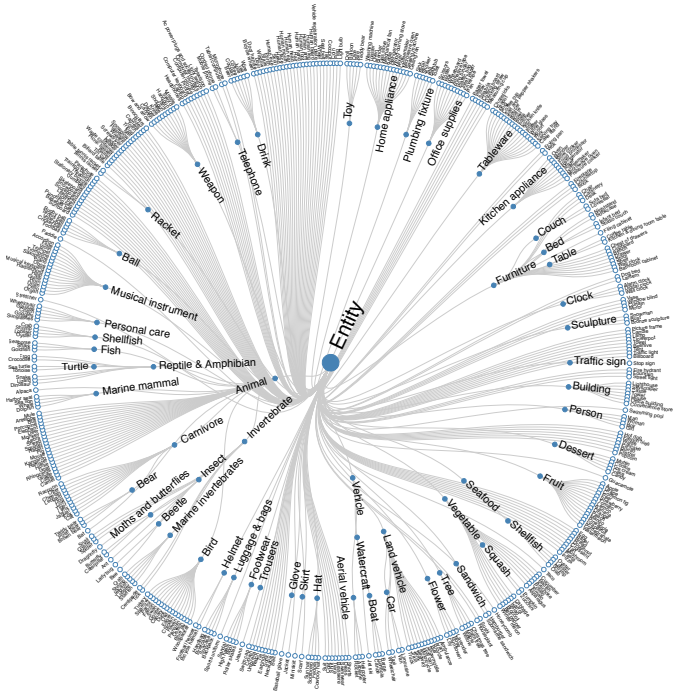

Open Images Dataset

Abstract

Open Images v6

Open Images is a dataset of ~9M images annotated with image-level labels, object bounding boxes, object segmentation masks, visual relationships, and localized narratives:

It contains a total of 16M bounding boxes for 600 object classes on 1.9M images, making it the largest existing dataset with object location annotations. The boxes have been largely manually drawn by professional annotators to ensure accuracy and consistency. The images are very diverse and often contain complex scenes with several objects (8.3 per image on average).

Open Images also offers visual relationship annotations, indicating pairs of objects in particular relations (e.g. "woman playing guitar", "beer on table"), object properties (e.g. "table is wooden"), and human actions (e.g. "woman is jumping"). In total it has 3.3M annotations from 1,466 distinct relationship triplets.

In V5 we added segmentation masks for 2.8M object instances in 350 classes. Segmentation masks mark the outline of objects, which characterizes their spatial extent to a much higher level of detail.

In V6 we added 675k localized narratives: multimodal descriptions of images consisting of synchronized voice, text, and mouse traces over the objects being described. (Note we originally launched localized narratives only on train in V6, but since July 2020 we also have validation and test covered.)

Finally, the dataset is annotated with 59.9M image-level labels spanning 19,957 classes.

We believe that having a single dataset with unified annotations for image classification, object detection, visual relationship detection, instance segmentation, and multimodal image descriptions will enable to study these tasks jointly and stimulate progress towards genuine scene understanding.

Open Images Challenge 2019

Open Images Challenges 2019 is based on the V5 release of the Open Images dataset. The images of the dataset are very varied and often contain complex scenes with several objects (explore the dataset).

Citation

@article{OpenImages,

author = {Alina Kuznetsova and Hassan Rom and Neil Alldrin and Jasper Uijlings and Ivan Krasin and Jordi Pont-Tuset and Shahab Kamali and Stefan Popov and Matteo Malloci and Alexander Kolesnikov and Tom Duerig and Vittorio Ferrari},

title = {The Open Images Dataset V4: Unified image classification, object detection, and visual relationship detection at scale},

year = {2020},

journal = {IJCV}

}

Prepare Dataset

You need to download and extract Open Images dataset.

The Open Images dataset does not have image metas (width and height of the image), which will be used during training and testing (evaluation). We suggest to get test image metas before training/testing by using

tools/misc/get_image_metas.py.

Usage

python tools/misc/get_image_metas.py ${CONFIG} \

--dataset ${DATASET TYPE} \ # train or val or test

--out ${OUTPUT FILE NAME}

- The directory should be like this:

mmdetection

├── mmdet

├── tools

├── configs

├── data

│ ├── OpenImages

│ │ ├── annotations

│ │ │ ├── bbox_labels_600_hierarchy.json

│ │ │ ├── class-descriptions-boxable.csv

│ │ │ ├── oidv6-train-annotations-bbox.scv

│ │ │ ├── validation-annotations-bbox.csv

│ │ │ ├── validation-annotations-human-imagelabels-boxable.csv

│ │ │ ├── validation-image-metas.pkl # get from script

│ │ ├── challenge2019

│ │ │ ├── challenge-2019-train-detection-bbox.txt

│ │ │ ├── challenge-2019-validation-detection-bbox.txt

│ │ │ ├── class_label_tree.np

│ │ │ ├── class_sample_train.pkl

│ │ │ ├── challenge-2019-validation-detection-human-imagelabels.csv # download from official website

│ │ │ ├── challenge-2019-validation-metas.pkl # get from script

│ │ ├── OpenImages

│ │ │ ├── train # training images

│ │ │ ├── test # testing images

│ │ │ ├── validation # validation images

Note:

- The training and validation images of Open Images Challenge dataset are based on Open Images v6, but the test images are different.

- The Open Images Challenges annotations are obtained from TSD. You can also download the annotations from official website, and set data.train.type=OpenImagesDataset, data.val.type=OpenImagesDataset, and data.test.type=OpenImagesDataset in the config

- If users do not want to use

validation-annotations-human-imagelabels-boxable.csvandchallenge-2019-validation-detection-human-imagelabels.csvusers can settest_dataloader.dataset.image_level_ann_file=Noneandtest_dataloader.dataset.image_level_ann_file=Nonein the config. Please note that loading image-levels label is the default of Open Images evaluation metric. More details please refer to the official website

Results and Models

| Architecture | Backbone | Style | Lr schd | Sampler | Mem (GB) | Inf time (fps) | box AP | Config | Download |

|---|---|---|---|---|---|---|---|---|---|

| Faster R-CNN | R-50 | pytorch | 1x | Group Sampler | 7.7 | - | 51.6 | config | model | log |

| Faster R-CNN | R-50 | pytorch | 1x | Class Aware Sampler | 7.7 | - | 60.0 | config | model | log |

| Faster R-CNN (Challenge 2019) | R-50 | pytorch | 1x | Group Sampler | 7.7 | - | 54.9 | config | model | log |

| Faster R-CNN (Challenge 2019) | R-50 | pytorch | 1x | Class Aware Sampler | 7.1 | - | 65.0 | config | model | log |

| Retinanet | R-50 | pytorch | 1x | Group Sampler | 6.6 | - | 61.5 | config | model | log |

| SSD | VGG16 | pytorch | 36e | Group Sampler | 10.8 | - | 35.4 | config | model | log |

Notes:

- 'cas' is short for 'Class Aware Sampler'

Results of consider image level labels

| Architecture | Sampler | Consider Image Level Labels | box AP |

|---|---|---|---|

| Faster R-CNN r50 (Challenge 2019) | Group Sampler | w/o | 62.19 |

| Faster R-CNN r50 (Challenge 2019) | Group Sampler | w/ | 54.87 |

| Faster R-CNN r50 (Challenge 2019) | Class Aware Sampler | w/o | 71.77 |

| Faster R-CNN r50 (Challenge 2019) | Class Aware Sampler | w/ | 64.98 |